Or should the importances reflect how much the model depends on each of the features, regardless whether the learned relationships generalize to unseen data?

Zero because none of the features contribute to improved performance on unseen test data? What values for the feature importance would you expect for the 50 features of this overfitted SVM? In other words, the SVM model is garbage. The mean absolute error (short: mae) for the training data is 0.29 and for the test data 0.82, which is also the error of the best possible model that always predicts the mean outcome of 0 (mae of 0.78). If the model “learns” any relationships, then it overfits.Īnd in fact, the SVM did overfit on the training data. This is like predicting tomorrow’s temperature given the latest lottery numbers. I trained a support vector machine to predict a continuous, random target outcome given 50 random features (200 instances).īy “random” I mean that the target outcome is independent of the 50 features. The best way to understand the difference between feature importance based on training vs. based on test data is an “extreme” example. Tl dr: You should probably use test data.Īnswering the question about training or test data touches the fundamental question of what feature importance is. I can only recommend using the n(n-1) -method if you are serious about getting extremely accurate estimates.Ĩ.5.2 Should I Compute Importance on Training or Test Data? This gives you a dataset of size n(n-1) to estimate the permutation error, and it takes a large amount of computation time. If you want a more accurate estimate, you can estimate the error of permuting feature j by pairing each instance with the value of feature j of each other instance (except with itself). This is exactly the same as permuting feature j, if you think about it. Input: Trained model \(\hat\)įisher, Rudin, and Dominici (2018) suggest in their paper to split the dataset in half and swap the values of feature j of the two halves instead of permuting feature j.

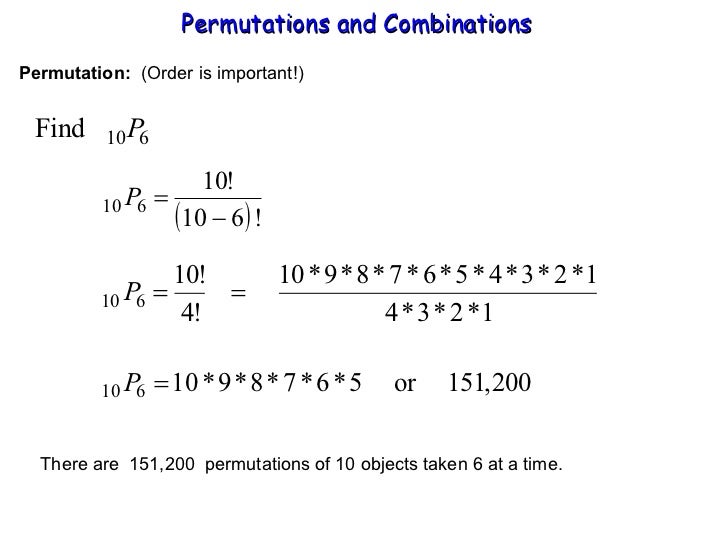

The permutation feature importance algorithm based on Fisher, Rudin, and Dominici (2018): They also introduced more advanced ideas about feature importance, for example a (model-specific) version that takes into account that many prediction models may predict the data well. The permutation feature importance measurement was introduced by Breiman (2001) 43 for random forests.īased on this idea, Fisher, Rudin, and Dominici (2018) 44 proposed a model-agnostic version of the feature importance and called it model reliance. We measure the importance of a feature by calculating the increase in the model’s prediction error after permuting the feature.Ī feature is “important” if shuffling its values increases the model error, because in this case the model relied on the feature for the prediction.Ī feature is “unimportant” if shuffling its values leaves the model error unchanged, because in this case the model ignored the feature for the prediction.
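The random-feature experiment and the permutation importance computation described in this section can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the author's code. A 1-nearest-neighbour regressor stands in for the SVM, because it overfits by construction and needs no third-party library. Only 5 random features are used instead of 50 to keep the pure-Python loop fast. Importance is taken as the difference between the permuted and the original error, because the training error of this stand-in model is exactly zero and a ratio would be undefined. The helper names `mae`, `make_1nn` and `permutation_importance` are made up for this sketch.

```python
import random


def mae(y_true, y_pred):
    """Mean absolute error between two equally long sequences."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def make_1nn(X_train, y_train):
    """Return a predict function that memorises the training data (1-nearest-neighbour)."""
    def predict(X):
        preds = []
        for row in X:
            # Index of the closest training row by squared Euclidean distance.
            best = min(
                range(len(X_train)),
                key=lambda i: sum((a - b) ** 2 for a, b in zip(row, X_train[i])),
            )
            preds.append(y_train[best])
        return preds
    return predict


def permutation_importance(predict, X, y, rng):
    """Importance of feature j = error after shuffling column j minus the original error."""
    e_orig = mae(y, predict(X))
    importances = []
    for j in range(len(X[0])):
        column = [row[j] for row in X]
        rng.shuffle(column)  # breaks the link between feature j and the target
        X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
        importances.append(mae(y, predict(X_perm)) - e_orig)
    return e_orig, importances


rng = random.Random(0)
n, p = 200, 5  # assumption: 5 features instead of the 50 in the text, for speed
X = [[rng.gauss(0, 1) for _ in range(p)] for _ in range(n)]
y = [rng.gauss(0, 1) for _ in range(n)]  # target is independent of every feature

X_train, y_train = X[:100], y[:100]
X_test, y_test = X[100:], y[100:]
predict = make_1nn(X_train, y_train)  # memorises the training set, so it overfits

train_err, train_imp = permutation_importance(predict, X_train, y_train, rng)
test_err, test_imp = permutation_importance(predict, X_test, y_test, rng)

print("train MAE:", round(train_err, 2), "test MAE:", round(test_err, 2))
print("importance on training data:", [round(v, 2) for v in train_imp])
print("importance on test data:    ", [round(v, 2) for v in test_imp])
```

On the training data every feature should look important, because shuffling it stops the model from finding the memorised row. On the test data the importances should scatter around zero, because the model never predicted well there in the first place.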
